The pipeline is built — now does it change anything on site? This post moves from architecture to outcomes. Using the twin pipeline from Part 1, we walk through four real decision loops: formwork release driven by concrete maturity, crane stop-work alerts under combined wind and load, dynamic safety zone enforcement via BLE tracking, and intra-day schedule re-optimization from live progress data. We also cover implementation strategy, stack selection, and closing the loop into planning and safety systems. We close with a forward look at agentic AI on the twin — from decision support to autonomous operational co-pilot.

Part 1 of this series established the technical foundation: how to ingest real-time IoT sensor data, enrich it with BIM semantics via IRI mapping and RDF triples, maintain a live twin state store, and serve it at operational latency. Part 2 puts that pipeline to work.

This post answers the question that matters most to practitioners: does any of this actually change what people decide and do on a construction site? The answer is yes — but only when the twin is connected to the systems and workflows that govern real field actions.

Across four concrete scenarios — formwork release timing driven by maturity index, crane stop-work decisions under combined wind and load, continuous safety zone enforcement via BLE tracking, and intra-day schedule re-optimization from live progress data — we show how real-time twin state replaces fixed rules, periodic inspections, and intuition-based planning with data-driven, auditable, automated decision loops.

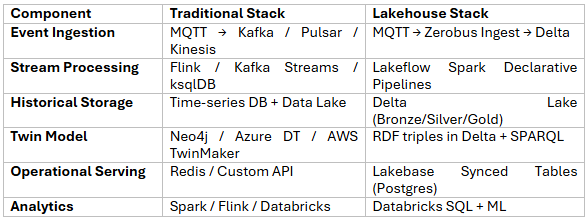

The second half of this post covers implementation strategy: why you should start narrow and deep with one high-impact loop rather than twinning the whole project, how to manage data quality and sensor-to-asset mapping as a safety-critical concern, how to choose between traditional (Kafka/Neo4j/Redis) and lakehouse (Zerobus Ingest/Delta/Lakebase) stacks, and how to close the loop back into CDEs, planning tools, and safety systems — because a twin that doesn't write back into operational systems creates reports, not outcomes.

The post closes with a forward look at agentic AI on the twin: autonomous agents that don't just surface recommendations but negotiate schedule changes, request permits, and coordinate resources — turning the digital twin from a decision support tool into an active operational participant.

The scenarios below show how real-time data and the twin pipeline change decisions, not just visualization.

Traditional approach

With IoT + Twin

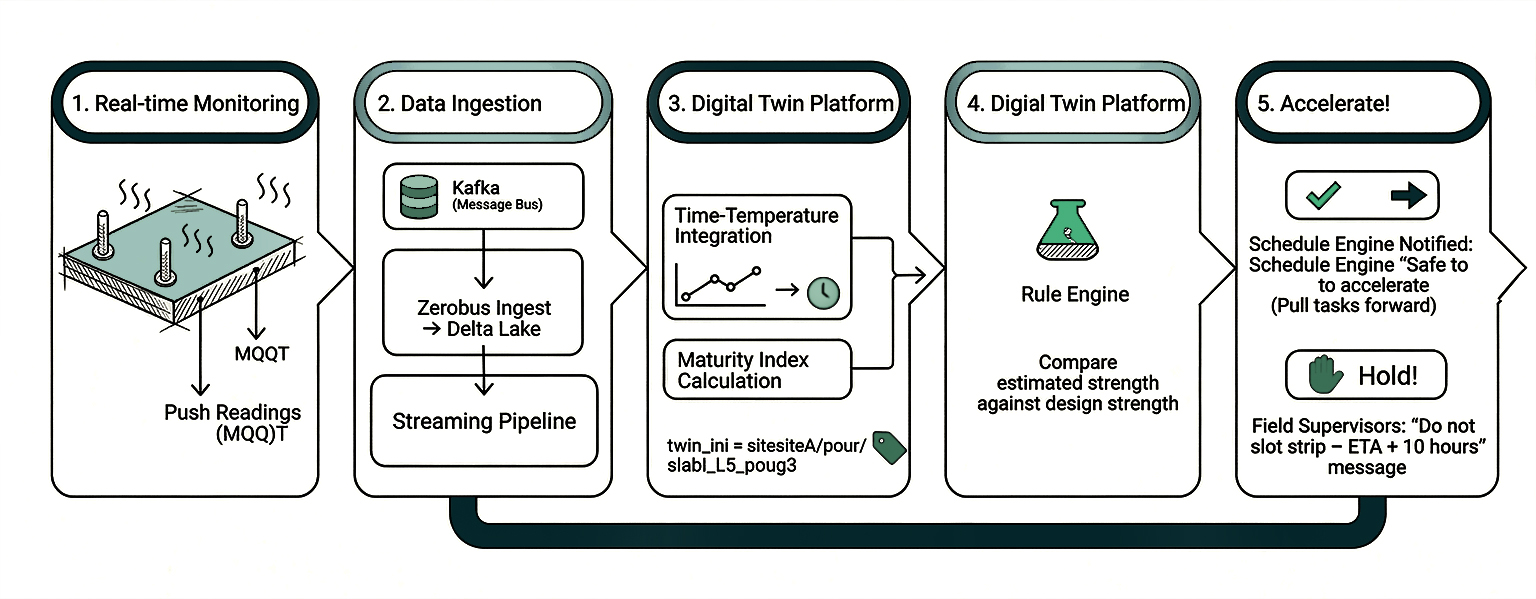

1. Temperature sensors embedded in slabs and columns push readings every few minutes via MQTT → Kafka (or Zerobus Ingest → Delta).

2. The streaming pipeline calculates maturity index per pour entity using time–temperature integration models.

3. The twin state for each pour entity (for example, twin_iri = site:siteA/pour/slab_L5_pour3) has its estimated_strength updated continuously.

4. A rule engine compares estimated_strength against design-required strength for tasks that depend on this pour (e.g., "erect next level formwork", "post-tensioning", "load from pallets").

5. If strength is reached earlier than planned, the schedule engine is notified to pull tasks forward, and the planner gets a "safe to accelerate" recommendation.

6. If strength is lagging, a hold is placed on those tasks, and field supervisors see a "Do not strip—ETA +10 hours" message.
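Steps 2 to 6 can be sketched in a few lines. This is a minimal illustration, assuming the Nurse-Saul form of time–temperature integration and a logarithmic strength–maturity curve. The datum temperature, the coefficients `a` and `b`, and all function names are placeholders introduced here, not values from the pipeline in Part 1; real coefficients come from lab calibration of the specific mix.

```python
import math
from dataclasses import dataclass

DATUM_TEMP_C = -10.0  # assumed datum temperature; calibrate per mix design


@dataclass
class Reading:
    hours: float   # elapsed time since the pour, in hours
    temp_c: float  # in-place concrete temperature, in deg C


def maturity_index(readings, datum_c=DATUM_TEMP_C):
    """Nurse-Saul maturity (deg C x hours) via trapezoidal integration."""
    total = 0.0
    for prev, cur in zip(readings, readings[1:]):
        avg_temp = (prev.temp_c + cur.temp_c) / 2.0
        dt = cur.hours - prev.hours
        # Intervals at or below the datum temperature add no maturity.
        total += max(avg_temp - datum_c, 0.0) * dt
    return total


def estimated_strength_mpa(maturity, a=-20.0, b=12.0):
    """Strength-maturity curve S = a + b * log10(M).

    a and b are illustrative placeholders, not calibrated values.
    """
    if maturity <= 0:
        return 0.0
    return max(a + b * math.log10(maturity), 0.0)


def release_decision(readings, required_mpa):
    """Return ("release" | "hold", estimated strength in MPa)."""
    strength = estimated_strength_mpa(maturity_index(readings))
    status = "release" if strength >= required_mpa else "hold"
    return status, strength
```

In a streaming job the same integration would run incrementally per pour entity, with the result written to estimated_strength on the twin and the "hold" or "release" status forwarded to the schedule engine.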

Decision change: Formwork stripping and follow-on works are driven by actual curing behavior, not fixed-duration rules. This can shave days off the critical path while preserving safety.

To measure the impact, track schedule variance at pour and activity level (planned vs actual dates for formwork stripping and next-level starts) before and after maturity-based decisions are introduced. Over a few cycles, you should see variance compress from multi-day ranges to hours, alongside fewer “late strip” delays logged in the scheduling system and lower rework or defect rates related to early loading.
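One way to compute that variance, sketched under the assumption that planned and actual timestamps can be exported from the scheduling system as pairs. The function names and the choice of summary statistics are illustrative, not part of any scheduling tool's API.

```python
from datetime import datetime
from statistics import mean, pstdev


def variance_hours(planned: datetime, actual: datetime) -> float:
    """Signed schedule variance in hours (positive means late)."""
    return (actual - planned).total_seconds() / 3600.0


def summarize(pairs):
    """Summarise variance over (planned, actual) pairs for one activity type."""
    deltas = [variance_hours(p, a) for p, a in pairs]
    return {
        "mean_h": mean(deltas),                    # average slip
        "spread_h": pstdev(deltas),                # how widely outcomes scatter
        "max_abs_h": max(abs(d) for d in deltas),  # worst single deviation
    }
```

Running `summarize` once on pours stripped under fixed-duration rules and once on pours released by maturity gives a like-for-like comparison of the spread.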

Traditional approach

With IoT + Twin

1. Load cells on hook and anemometers at boom tips stream data to iot.raw.equipment.crane1.*.

2. Stream jobs compute real-time load %, duty cycle, and wind speed, as well as cumulative fatigue usage and near-miss counts.

3. Twin state for crane:CRANE-01 includes wind_status (OK / caution / stop), overload_events_last_7d, fatigue_index.

4. A rules engine issues an immediate stop-work alert to the crane operator's HMI and to the safety officer when wind and load together exceed the combined criteria.

5. Planners use aggregated twin history (queried from Delta Lake via SPARQL or SQL) to reassign heavily stressed cranes to less demanding tasks and to schedule proactive inspection and maintenance before problems become visible.
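The combined wind-and-load check in step 4 might look like the sketch below. It assumes a simple linear derating of allowable load above a caution wind speed; the thresholds, the derating slope, and the names `CraneLimits` and `wind_status` are hypothetical. Actual limits must come from the crane manufacturer's load charts and the site lifting plan.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CraneLimits:
    caution_wind_ms: float = 10.0  # placeholder threshold, m/s
    stop_wind_ms: float = 20.0     # placeholder threshold, m/s
    derate_per_ms: float = 0.03    # allowable-load fraction lost per m/s above caution


DEFAULT_LIMITS = CraneLimits()


def allowed_load_pct(wind_ms, limits=DEFAULT_LIMITS):
    """Allowable load (% of rated capacity) at the given wind speed."""
    if wind_ms >= limits.stop_wind_ms:
        return 0.0
    excess = max(wind_ms - limits.caution_wind_ms, 0.0)
    return max(100.0 * (1.0 - limits.derate_per_ms * excess), 0.0)


def wind_status(wind_ms, load_pct, limits=DEFAULT_LIMITS):
    """Map current wind and load to the twin's wind_status value."""
    allowed = allowed_load_pct(wind_ms, limits)
    if wind_ms >= limits.stop_wind_ms or load_pct > allowed:
        return "stop"
    if wind_ms >= limits.caution_wind_ms or load_pct > 0.9 * allowed:
        return "caution"
    return "OK"
```

The point of the combined rule is that neither reading alone triggers the stop: a load that is acceptable in calm conditions becomes a stop-work condition once wind reduces the allowable envelope.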

Decision change: Crane usage and allocations become data-driven and risk-aware. Stop-work is not based solely on visual judgment but on continuous, logged conditions.

Impact can be quantified through leading and lagging indicators: number of overload or over‑wind alarms per 1,000 lifts, recorded crane-related near-misses, and unplanned crane downtime days. Over time, a well-tuned twin should reduce high-risk alarms and near-misses while keeping utilisation high and shifting more inspections into planned, proactive windows instead of reacting to faults.
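Normalising counts to a fixed exposure base keeps these indicators comparable across periods with different activity levels. A minimal sketch, with hypothetical helper names:

```python
def rate_per(events, exposure, per=1000):
    """Events normalised to a fixed exposure base, e.g. alarms per 1,000 lifts."""
    if exposure <= 0:
        raise ValueError("exposure must be positive")
    return events * per / exposure


def relative_change_pct(baseline, current):
    """Percentage change of a KPI against its baseline (negative means reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100.0
```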

Traditional approach

With IoT + Twin

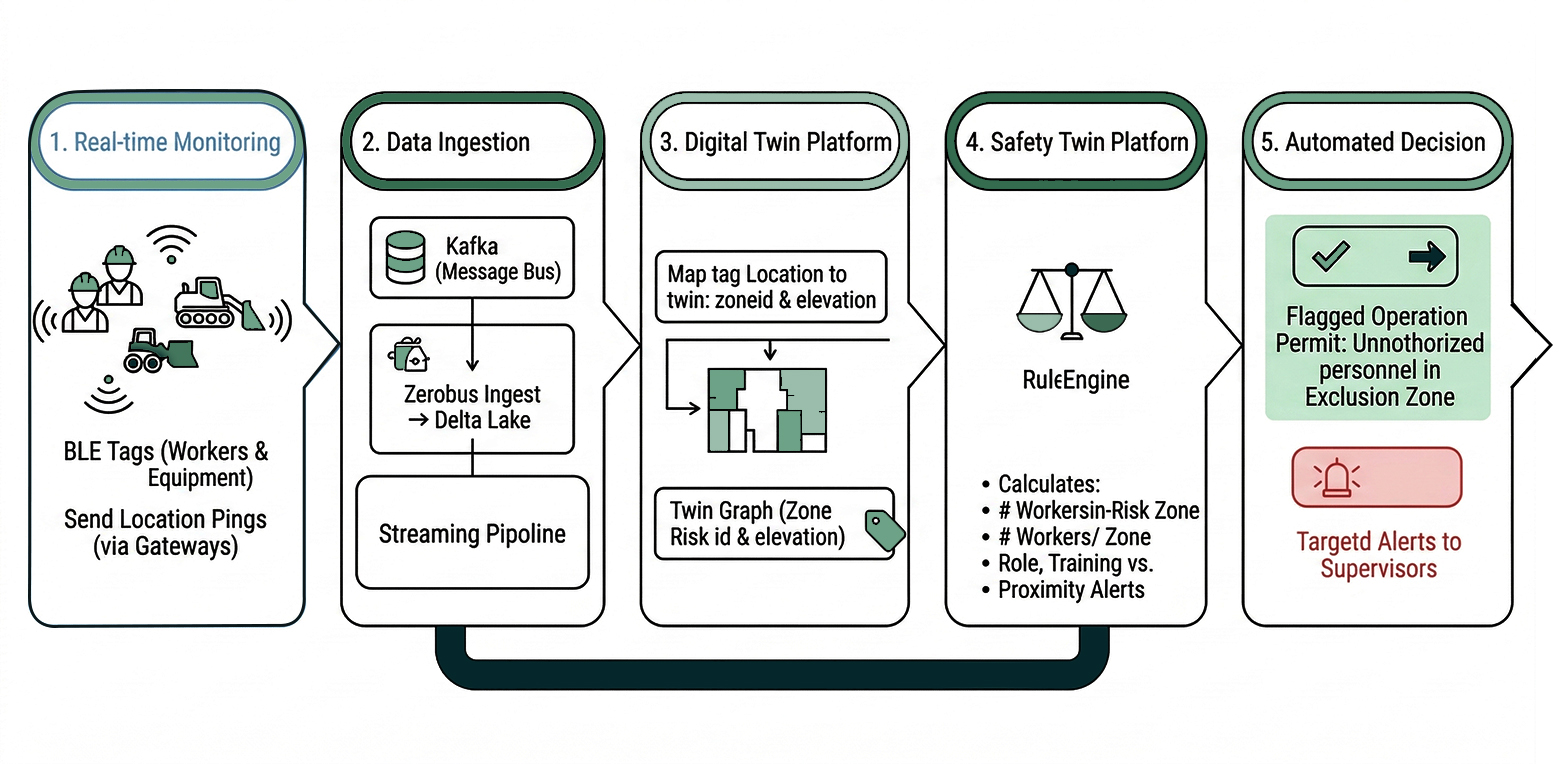

1. BLE tags on workers and equipment send location pings (via gateways → Kafka or Zerobus → Delta).

2. Stream processing maps each tag location to a twin zone_id and elevation.

3. The twin graph already knows which zones have active high-risk tasks and what safety rules apply per zone.

4. A safety rules engine calculates, in real time: number of workers per zone, role/training vs. zone authorization, proximity between workers and moving equipment.

5. If a high-risk operation starts while unauthorized workers are present in the exclusion zone, the operation permit is flagged and supervisors get targeted alerts.
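Steps 2 to 5 can be illustrated with a small in-memory sketch. It assumes zones are flat polygons in site coordinates and ignores elevation for brevity; the `Zone` fields, role names, and function names are hypothetical stand-ins for what the twin graph would supply.

```python
from dataclasses import dataclass, field


@dataclass
class Zone:
    zone_id: str
    polygon: list                      # [(x, y), ...] in site coordinates, metres
    high_risk_active: bool = False     # set from the twin's active-task state
    authorized_roles: set = field(default_factory=set)


def contains(polygon, x, y):
    """Ray-casting point-in-polygon test."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def locate(zones, x, y):
    """Return the first zone containing the point, or None."""
    for zone in zones:
        if contains(zone.polygon, x, y):
            return zone
    return None


def breaches(zones, pings):
    """pings: iterable of (tag_id, role, x, y). Returns (tag_id, zone_id) alerts."""
    alerts = []
    for tag_id, role, x, y in pings:
        zone = locate(zones, x, y)
        if zone and zone.high_risk_active and role not in zone.authorized_roles:
            alerts.append((tag_id, zone.zone_id))
    return alerts
```

A production version would also account for BLE position uncertainty, for example by requiring several consecutive pings inside a zone before raising an alert.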

Decision change: Work authorization and safety supervision become stateful and continuous rather than dependent on periodic inspections.

To monitor effectiveness, track how often the system flags unauthorized entries into exclusion zones per 10,000 work-hours, how long workers spend in high-risk zones without an active permit, and how many near-misses or incidents involve locations where the twin generated a real-time alert. These metrics provide a concrete view of how dynamic zoning reduces exposure and improves your overall safety incident rate.
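The second metric, time spent in a high-risk zone without an active permit, reduces to interval arithmetic. The sketch below assumes presence and permit windows are available as (start, end) pairs in hours; the function names are illustrative.

```python
def merge(intervals):
    """Merge overlapping (start, end) intervals so coverage is not double-counted."""
    merged = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


def unpermitted_hours(presence, permits):
    """Hours of a presence interval not covered by any permit interval."""
    start, end = presence
    covered = 0.0
    for p_start, p_end in merge(permits):
        covered += max(0.0, min(end, p_end) - max(start, p_start))
    return (end - start) - covered
```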

Consider tower crane utilization and concrete truck arrivals.

With the twin pipeline:

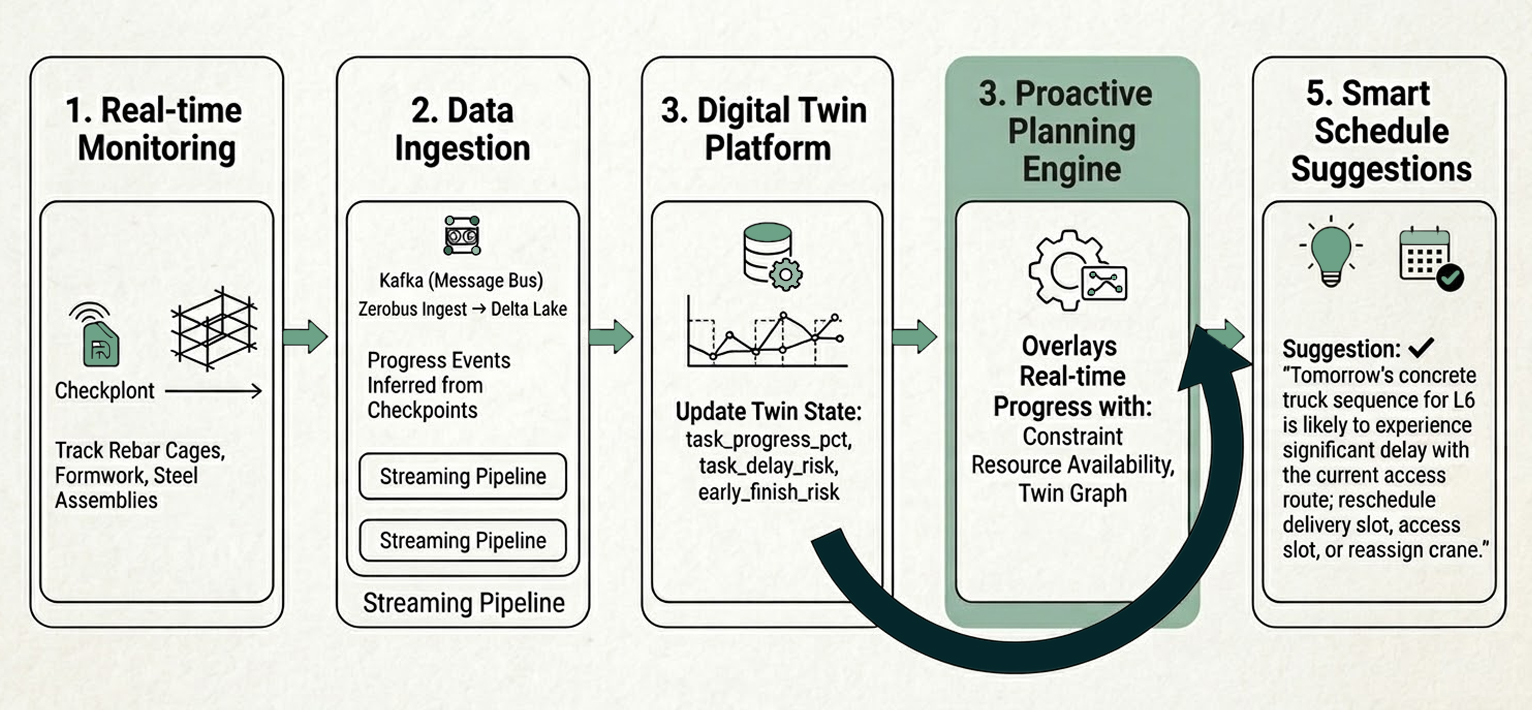

1. Real-time progress events are inferred from RFID tags on rebar cages and formwork assemblies passing checkpoints.

2. Stream jobs compute percent-complete for each task and update twin state: task.progress_pct, task.delay_risk, task.early_finish_risk.

3. The planning engine overlays this on resource availability, constraints, and the live twin graph.

4. The system suggests changes like: "Tomorrow's concrete truck sequence for L6 is likely to conflict with delayed steel delivery in the same access route; reschedule delivery slot or reassign crane."
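The kind of conflict in step 4 can be detected with a simple overlap check once forecast delays are applied to planned slots. This is a sketch under the assumption that each slot carries an access route and a delay estimate derived from live progress; the `Slot` fields and route names are hypothetical.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass
class Slot:
    task_id: str
    route: str            # shared access route or other contended resource
    start_h: float        # planned start, hours from start of day
    end_h: float          # planned end, hours from start of day
    delay_h: float = 0.0  # forecast delay inferred from twin progress state

    def window(self):
        """Planned window shifted by the forecast delay."""
        return self.start_h + self.delay_h, self.end_h + self.delay_h


def route_conflicts(slots):
    """Return pairs of task ids whose shifted windows overlap on the same route."""
    conflicts = []
    for a, b in combinations(slots, 2):
        if a.route != b.route:
            continue
        a_start, a_end = a.window()
        b_start, b_end = b.window()
        if a_start < b_end and b_start < a_end:
            conflicts.append((a.task_id, b.task_id))
    return conflicts
```

A full planning engine would go further and propose alternative slots; the overlap check is only the detection half of that loop.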

Decision change: Daily and even intra-day planning evolves from static look-ahead meetings to continuous optimization informed by live twin state.

Measuring impact in practice

A digital twin in construction only justifies its complexity if it moves hard numbers. In practice, teams focus on a small set of KPIs: schedule variance reduction on critical activities (such as pours and crane-dependent lifts), utilisation and fatigue metrics for major equipment, and safety indicators like near-miss rates, TRIR, and zone‑breach counts. By baselining these before rollout and tracking them quarter-on-quarter, you can demonstrate that the twin is not just a new dashboard layer but a measurable driver of schedule reliability and incident mitigation.

Rather than "digitally twin the whole project," pick one or two high-impact loops:

Build complete E2E flows:


The Databricks Solution Accelerator provides a ready-made framework that can be adapted for construction. While it ships with a manufacturing example, the building blocks—ingestion, mapping, serving, visualization—are domain-agnostic.

Most real-world setups end up with:

Avoid overloading BIM authoring tools as the twin runtime. They're great authoring environments but not designed to be low-latency state stores or event processors.

A digital twin is not only a technology deployment; it is an organisational change. Before committing to a full rollout, teams should perform a simple digital maturity assessment across four dimensions: data, processes, people, and platforms. On the data side, assess whether existing CDEs, ERP systems, and scheduling tools expose reliable interfaces and whether BIM models and sensor inventories are complete enough to support the first decision loop. On the process side, identify where current decision-making lives (daily coordination meetings, permit workflows, safety reviews) and how twin-driven insights would be embedded without creating parallel, shadow processes.

The people dimension is equally important. Supervisors, planners, and engineers must have the skills and capacity to interpret twin outputs, challenge them when they conflict with field reality, and feed corrections back into the system. This often means appointing clear owners for data quality and sensor-to-asset mapping, and training “twin champions” in each discipline.

Finally, platforms and IT: confirm that networking, security, and support models are in place for always-on telemetry, and that there is an agreed path for operating and maintaining the twin beyond the pilot. A candid view of these factors up front helps right-size ambition: start with a narrow, deep loop where the organisation is ready, instead of attempting a programme-wide twin that stalls on non-technical blockers.

The twin must write back into the systems that govern field actions: CDEs, planning tools, and safety systems.

Only when the twin changes what those systems decide or do does it create real value.

The construction industry has spent years building richer BIM models and deploying more IoT sensors. The gap has never been the data — it has been the pipeline and decision architecture needed to make that data act.

The four scenarios in this post share a common pattern: a streaming event triggers an enriched twin state update, which fires a rule or model, which pushes a contextual recommendation or automated action into the system a supervisor, planner, or engineer is already using. That loop — sensor to state to decision to action — is what transforms a digital twin from a monitoring tool into an operational co-pilot.
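Stripped of infrastructure, that shared pattern is small. The class below is a hypothetical in-memory sketch of the loop, not the pipeline from Part 1: an event updates twin state, rules are evaluated against the updated entity, and matching rules emit actions.

```python
class TwinLoop:
    """Event -> state update -> rule evaluation -> actions, held in memory."""

    def __init__(self):
        self.state = {}  # twin_iri -> dict of current properties
        self.rules = []  # (predicate, action) pairs

    def add_rule(self, predicate, action):
        """predicate(entity) -> bool; action(twin_iri, entity) -> any result."""
        self.rules.append((predicate, action))

    def on_event(self, twin_iri, updates):
        """Apply an update to one entity and return the actions it triggers."""
        entity = self.state.setdefault(twin_iri, {})
        entity.update(updates)
        return [
            action(twin_iri, entity)
            for predicate, action in self.rules
            if predicate(entity)
        ]
```

In practice the state dictionary would be the live twin store and the actions would be calls into the planning, permit, or alerting systems that people already use.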

The practical path forward is deliberate and incremental:

The twin's long-term value compounds as it accumulates cross-project history: curing performance across mix designs and weather conditions, crane fatigue patterns across equipment fleets, safety incident correlations across site layouts. That history trains better predictive models, benchmarks future projects, and eventually makes the twin the most valuable data asset an organization carries from project to project.

The next frontier — agentic AI on the twin — takes this further still. Agents that monitor twin state, evaluate options against schedule constraints and safety rules, and execute negotiated actions autonomously will make the construction site not just more informed, but genuinely more adaptive. The pipeline built in Part 1 and the decision loops described here are the prerequisite infrastructure for that future.

BIM provides the schema. IoT provides the pulse. The pipeline makes it matter.