Generative AI has transformed how organisations interact with information, but text-based models alone are insufficient for high-stakes enterprise environments. While Retrieval-Augmented Generation improves contextual grounding, it does not create validated, governed relationships between entities. This blog explains why defensible enterprise intelligence requires a structured semantic layer built on knowledge graphs. By modelling entities, relationships, lineage and governance constraints explicitly, organisations can enable deterministic, auditable reasoning. When generative AI operates on top of this foundation, as demonstrated through Merit’s Know It All Agent (KIAA), the result is intelligence that is not only fluent, but structurally validated, traceable and enterprise-ready.

Generative artificial intelligence has significantly changed how organisations interact with information. Modern language models can summarise reports, draft responses, and answer questions with impressive fluency. In professional services, this has accelerated how teams engage with client documentation, regulatory content, contracts, and research archives.

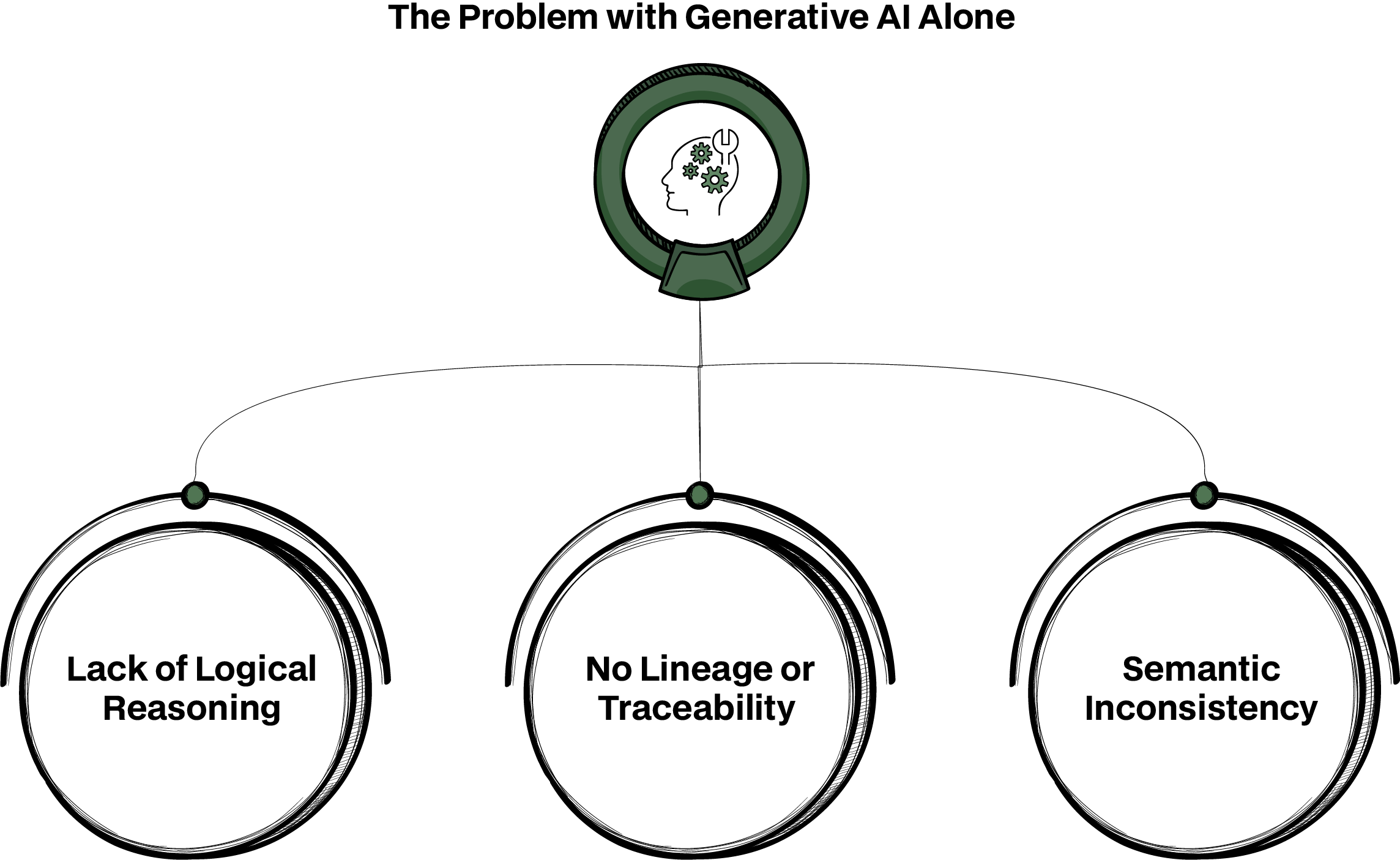

However, when these systems are applied to complex enterprise environments, limitations quickly become apparent. Generative AI is highly effective at synthesising text, but it does not inherently understand validated relationships between entities or enforce consistency across systems.

Consider a compliance review scenario. A text-only model might summarise a regulatory clause and suggest how it applies to a contract.

Even when combined with Retrieval-Augmented Generation (RAG) to pull relevant documents, the model is still primarily matching patterns in retrieved text. It does not validate whether the clause formally governs a specific contractual obligation, whether that obligation has been superseded, or how it connects to internal policy controls. The response may appear coherent, but it is not relationship-validated.

RAG is often an important intermediate step. By grounding outputs in retrieved documents, organisations reduce hallucination risk and improve contextual relevance. But retrieval alone does not create structured understanding. It surfaces documents; it does not model how regulations, clauses, policies, risk categories, and operational processes formally relate to one another. Without an underlying semantic framework, generative responses can still be incomplete, inconsistent, or difficult to defend.

In professional settings where decisions carry legal, financial, or compliance implications, this gap becomes material. Teams must demonstrate not just what an answer is, but why it is valid and how it connects to governed business logic. What organisations require is enterprise intelligence that is grounded in explicitly modelled relationships.

Knowledge graphs provide this structural layer. They represent information as entities and formally defined relationships, embed business semantics, and capture lineage and governance metadata. When a compliance question is asked in a graph-backed system, the response is not derived from text similarity alone. It is generated by traversing validated relationships: which regulation governs which clause, which clause maps to which deliverable, which policy constrains which action. The answer can be traced through these links, providing both narrative clarity and structural justification.

In this blog, we explore why text-only approaches - even when enhanced with retrieval - are insufficient for high-stakes enterprise use, how knowledge graphs introduce reasoning, lineage and governance, and how professional services organisations can combine structured intelligence with generative AI through advanced platforms such as Merit’s Know It All Agent (KIAA) to produce intelligence that is not only fluent, but defensible.

Generative AI is trained on patterns in text. It recognises statistical associations and produces responses that appear coherent because they mirror the structure of language seen in its training data. But statistical coherence is not the same as semantic understanding.

For standard conversational tasks, this limitation is acceptable. In enterprise contexts, it becomes a liability. Organisations regularly encounter several core challenges when using generative models without structured knowledge backing:

Modern language models can approximate relational reasoning through advanced prompting techniques and structured retrieval. They can generate multi-step explanations that appear logically coherent.

However, this reasoning remains probabilistic rather than deterministic. The model is not operating over a governed, organisation-defined representation of relationships. It infers connections based on patterns, not on validated, canonical links between entities.

In enterprise contexts, the limitation is not the absence of reasoning, but the absence of controlled, auditable reasoning grounded in explicitly modelled business logic.

During a regulatory impact assessment, a model may explain how a new financial conduct rule could affect client reporting obligations. Yet without a formally modelled relationship between that rule, specific contractual clauses, and internal reporting controls, the explanation cannot confirm whether the linkage reflects the firm’s governed policy structure or merely a plausible textual association.

Models do not natively provide source references, provenance or reasoning pathways. In regulated industries or consulting engagements, practitioners must show why an inference is valid and where it came from.

When responding to an internal audit query about why a risk classification was elevated, a generative system might summarise relevant documents. But unless it can trace the conclusion back to specific policy versions, contract amendments, and dated regulatory updates, the answer cannot be defended in a formal audit review.

A single entity might be mentioned in multiple ways across documents and systems. Without canonical reconciliation, generative outputs can conflict or provide ambiguous answers.

A client entity may appear as “ABC Holdings Ltd” in a contract repository, “ABC Group” in a CRM system, and under a legacy identifier in a risk database. Without entity resolution and canonical mapping, a model may treat these as separate entities, leading to incomplete exposure analysis or inconsistent reporting across engagements.

These issues surface acutely in professional services contexts, where queries are seldom about simple facts and often about relationships; for example, how a regulatory clause affects contractual obligations across jurisdictions, or how interdependencies between policies influence risk assessments.

When generative AI is used in isolation, organisations are left with plausible but unreliable outputs. The need for structure is not hypothetical; it is operational.

Knowledge graphs do not merely store data. In enterprise environments, they are typically implemented as ontology-backed semantic layers built on graph databases or RDF triple stores. Entities are represented as nodes, relationships as edges or triples, and both are governed by explicit schema definitions, controlled vocabularies, and validation constraints.

Rather than relying on inferred language patterns, this structure encodes formally defined relationships that reflect business logic. Ontologies define what types of entities exist (e.g. Regulation, Contract Clause, Policy, Risk Category), what relationships are permitted between them (e.g. governs, references, supersedes, impacts), and which constraints must hold true.

These constraints can be enforced through schema validation, business rules, SHACL validation layers, or rule-based inference engines depending on the implementation. The graph does not “reason” autonomously in a human sense; instead, it enables deterministic traversal and rule-driven inference over explicitly modelled relationships.

This architectural approach provides three practical capabilities that text-only systems cannot reliably enforce.

Entities and relationships are explicitly modelled and validated against a schema. Queries traverse only permitted relationship types, and rule engines can enforce domain logic such as “a regulatory clause must map to an approved policy before affecting a contractual obligation.”

For example, when assessing regulatory impact, traversal logic can follow a governed path: Regulation → Policy Control → Contract Clause → Risk Classification.

Each step is defined in the ontology and validated against business constraints. The output is not simply a plausible explanation; it reflects permitted and validated structural relationships.
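The governed path above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any particular graph database: the relationship names (`implemented_by`, `applies_to`, `classified_as`, `mentions`), the node typing, and the `governed_paths` helper are all hypothetical, and the triples are held in memory rather than in a real triple store.

```python
# Hypothetical node typing and triples; a real system would read these from a graph store.
NODE_TYPES = {
    "Regulation 23(b)(ii)": "Regulation",
    "Policy P-457": "PolicyControl",
    "Clause 12.4": "ContractClause",
    "Clause 9.1": "ContractClause",
    "Risk Category Alpha": "RiskClassification",
}

TRIPLES = [
    ("Regulation 23(b)(ii)", "implemented_by", "Policy P-457"),
    ("Policy P-457", "applies_to", "Clause 12.4"),
    ("Clause 12.4", "classified_as", "Risk Category Alpha"),
    # A textual mention is stored, but it is not part of the governed path.
    ("Regulation 23(b)(ii)", "mentions", "Clause 9.1"),
]

# Assumed governed path: each step is (relationship type, expected target node type).
GOVERNED_PATH = [
    ("implemented_by", "PolicyControl"),
    ("applies_to", "ContractClause"),
    ("classified_as", "RiskClassification"),
]


def governed_paths(start, triples=TRIPLES, node_types=NODE_TYPES, path_spec=GOVERNED_PATH):
    """Return every path from `start` that follows the governed path step by step."""
    paths = [[start]]
    for relation, expected_type in path_spec:
        extended = []
        for path in paths:
            for subject, predicate, obj in triples:
                if (subject == path[-1] and predicate == relation
                        and node_types.get(obj) == expected_type):
                    extended.append(path + [obj])
        paths = extended
    return paths


for path in governed_paths("Regulation 23(b)(ii)"):
    print(" -> ".join(path))
```

The `mentions` edge to Clause 9.1 is never followed, because the traversal only accepts the relationship types named in the path specification. That is the practical difference between a governed traversal and a similarity match.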

Graph implementations typically store provenance metadata at node and edge level - including source document references, timestamps, version identifiers, and transformation histories.

Because relationships are explicitly stored rather than inferred at query time, the traversal path itself becomes part of the audit trail. A compliance query can therefore return not only a conclusion, but the precise entities, relationships, rule evaluations, and source artefacts that produced it.

This moves explainability from narrative justification to structural traceability.
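As a rough sketch of what structural traceability can look like, the snippet below stores provenance on each edge and returns the traversed edges alongside the answer. The field names, document names, versions, and dates are hypothetical placeholders chosen for illustration; real implementations will carry whatever provenance schema the organisation defines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Edge:
    source: str
    relation: str
    target: str
    # Hypothetical provenance fields stored at edge level.
    source_document: str
    version: str
    recorded_on: str  # ISO date string


# Illustrative data only; document names, versions, and dates are invented.
EDGES = [
    Edge("Regulation 23(b)(ii)", "constrains", "Clause 12.4",
         "regulatory_circular.pdf", "v2", "2024-01-15"),
    Edge("Clause 12.4", "affects", "Contract XYZ deliverable timelines",
         "contract_xyz.pdf", "v5", "2024-02-01"),
]


def trace(edges, start, goal):
    """Depth-first search that returns the edges linking start to goal, or None."""
    by_source = {}
    for edge in edges:
        by_source.setdefault(edge.source, []).append(edge)
    stack = [(start, [])]
    seen = set()
    while stack:
        node, trail = stack.pop()
        if node == goal:
            return trail
        if node in seen:
            continue
        seen.add(node)
        for edge in by_source.get(node, []):
            stack.append((edge.target, trail + [edge]))
    return None


def audit_trail(trail):
    """Render each traversed edge together with its provenance metadata."""
    return [
        f"{e.source} -[{e.relation}]-> {e.target} "
        f"(source: {e.source_document}, version: {e.version}, recorded: {e.recorded_on})"
        for e in trail
    ]


trail = trace(EDGES, "Regulation 23(b)(ii)", "Contract XYZ deliverable timelines")
for line in audit_trail(trail):
    print(line)
```

Because the trail is a list of stored edges rather than generated text, the same query produces the same justification every time it is run against the same graph version.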

In enterprise graphs, entity resolution is not an afterthought. Canonical identifiers are assigned through reconciliation processes, and naming conventions are governed through controlled vocabularies and taxonomy alignment.

Schema constraints prevent duplicate representations of the same entity type, and validation rules ensure relationship consistency.

As a result, when multiple systems refer to the same client, clause, or regulatory instrument under different labels, the graph consolidates them into a governed representation. Queries operate over canonical entities rather than fragmented references, reducing inconsistency and ambiguity.

The key distinction is this: knowledge graphs do not automatically “solve” reasoning. What they provide is a constrained semantic environment where reasoning can be made deterministic, rule-governed, and auditable.

When generative AI operates on top of such a governed semantic layer, it is no longer synthesising relationships from text patterns alone. It is articulating insights derived from explicitly modelled, validated and traceable enterprise logic.

To illustrate how structure changes the quality of intelligence, consider a scenario common in professional services: synthesising insights from a large corpus of client contracts, industry regulations and internal policies.

A generative model alone might produce a reasonable summary when asked about compliance requirements, but it cannot reliably explain how a clause in a regulatory regime maps to specific contractual obligations or how overlapping jurisdictions interact. It lacks a semantic backbone that ties entities together.

A knowledge graph, on the other hand, encodes entities such as “Regulation 23(b)(ii)”, “Clause 12.4 of Contract XYZ”, and “Risk Category Alpha”, and explicitly defines relationships like governs, is referenced in, impacts, or is subordinate to. When a generative AI queries this graph through a platform like KIAA (Know It All Agent), it can trace paths through the graph to provide answers grounded in structural relationships. The response can then include not just narrative, but the reasoning pathway and source lineage that support it.

For example, a KIAA response might explain that Regulation 23(b)(ii) constrains practices in Clause 12.4, which in turn affects deliverable timelines under Contract XYZ, and that this linkage aligns with internal policy P-457 and prior audit classifications. The relationships themselves are defined and validated within the graph; the generative layer articulates them in usable language.

This distinction is important. The system is not independently “reasoning” in an unconstrained way. It is synthesising structured, schema-governed graph traversals into narrative form.

The reliability comes from the underlying semantic model; the fluency comes from the generative layer built on top of it.

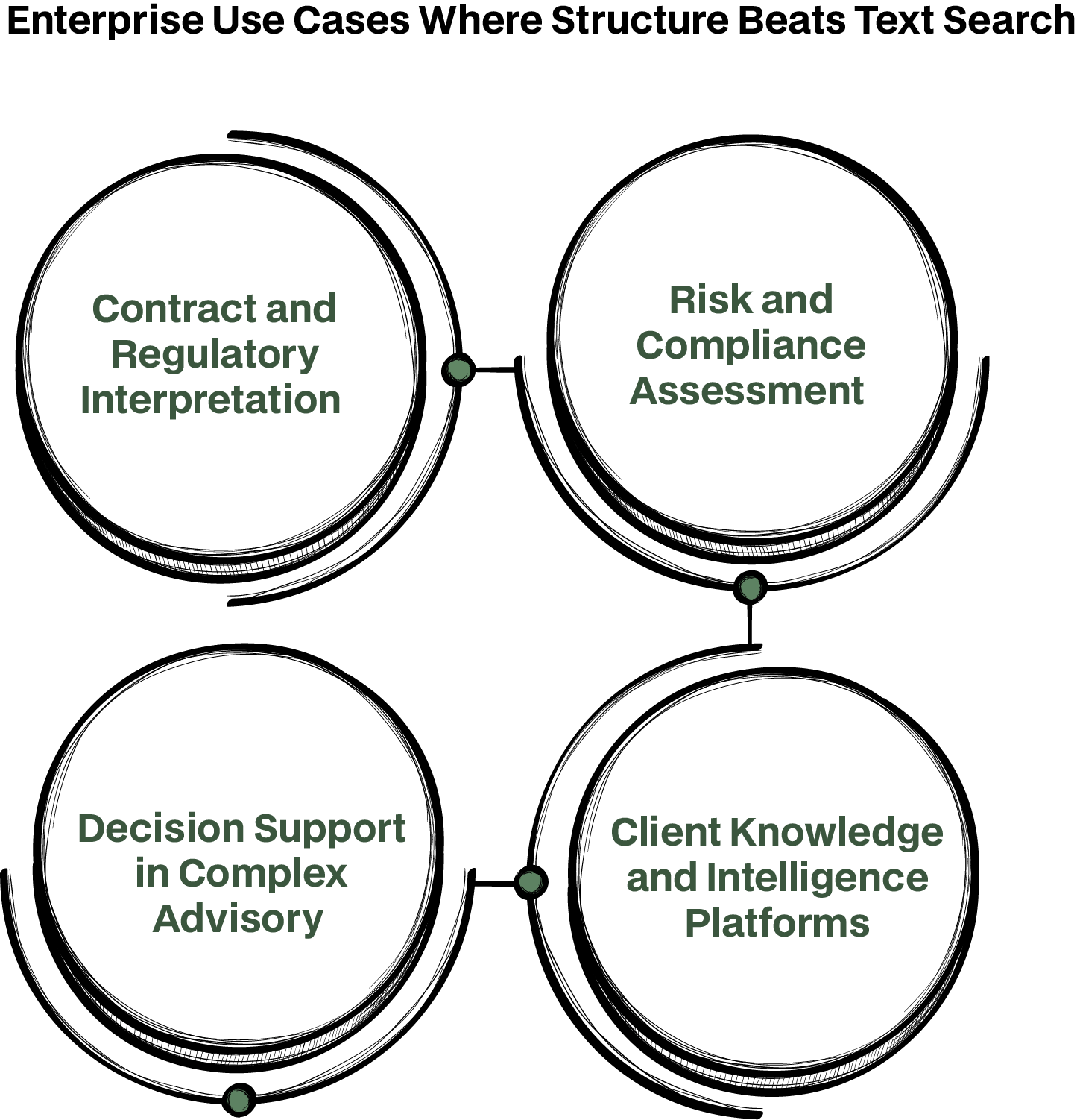

There are numerous scenarios where relationships, explainability, and consistency matter more than keyword retrieval and summarisation. The distinction becomes clear when contrasting what traditional search returns with what a graph-backed query produces.

A traditional search system retrieves documents containing relevant keywords and may summarise five clauses referencing a regulatory term. The output highlights text similarity but does not determine whether those clauses are formally governed by the same regulation, whether one supersedes another, or whether cross-jurisdictional conflicts exist.

A graph query, by contrast, traverses explicitly modelled relationships: Regulation → Jurisdiction → Policy Mapping → Contract Clause → Amendment Status.

Instead of listing clauses with similar wording, it identifies which clauses are formally governed by the regulation, which are exceptions, and where jurisdictional overlaps create compliance risk.

Operational impact: Reduced contract review cycles, fewer interpretation discrepancies between teams, and faster identification of regulatory conflicts.

Search-based systems retrieve documents referencing specific risk terms. A summarisation layer may describe risk factors mentioned across policies and reports.

A graph query evaluates structured relationships between regulatory obligations, control frameworks, client classifications, and operational processes. It can surface how a regulatory change propagates through specific controls and into client-level exposure categories — based on defined mappings rather than textual overlap.

Operational impact: More consistent risk categorisation, fewer post-audit corrections, and clearer traceability during compliance reviews.

Text search aggregates CRM entries, engagement notes, and survey results containing a client’s name or related keywords. The result is document retrieval rather than consolidated intelligence.

A graph-backed query reconciles canonical client identifiers, resolves entity variations across systems, and traverses engagement history, contractual exposure, risk tier, and advisory themes through governed relationships.

The output is not a collection of documents, but a unified client intelligence view grounded in reconciled entities.

Operational impact: Improved cross-team consistency, reduced duplication of effort, and more evidence-based client strategy development.

In strategy, M&A, or litigation support, search retrieves precedent documents and prior case summaries. Generative summarisation produces high-level thematic insights.

A graph query navigates taxonomies of sectors, regulatory regimes, contractual archetypes, and historical outcomes.

It surfaces structurally comparable cases, highlights relationship-driven risk factors, and identifies where governance rules were decisive in prior engagements. The generative layer then synthesises these structured results into a narrative recommendation, grounded in modelled relationships rather than thematic similarity alone.

Operational impact: Faster scenario analysis, greater cross-engagement consistency, and more defensible advisory outputs.

Across these use cases, unstructured text retrieval provides access. Structured graph queries provide validated context. Search tells you where a term appears. A governed knowledge graph tells you how entities are formally connected — and whether those connections satisfy organisational rules.

That difference translates directly into operational efficiency, compliance assurance, and decision confidence.

Constructing an enterprise knowledge graph requires more than ad-hoc schema design. It requires disciplined data engineering, semantic modelling, and governance controls that ensure consistency as the graph evolves.

The first step is entity identification and extraction. Entities such as regulations, contract clauses, risk categories, clients, policies and operational processes must be extracted from structured systems (e.g. CRM, risk platforms, contract repositories) and unstructured artefacts (e.g. PDFs, regulatory circulars, advisory reports).

In practice, this involves a combination of NLP-based entity recognition, domain-specific extraction rules, and taxonomy alignment. Extracted entities are mapped to predefined ontology classes (e.g. Regulation, Clause, Policy Control, Client Entity) rather than stored as free-form text labels.

This ensures that new entities enter the graph within a controlled semantic framework, rather than creating uncontrolled type proliferation.

Different systems often refer to the same real-world entity using different identifiers, naming conventions, or legacy codes. Entity resolution processes reconcile these variations into canonical identifiers.

Resolution may combine deterministic matching (e.g. legal entity IDs, regulatory codes) with probabilistic similarity scoring, followed by rule-based validation. Once reconciled, canonical IDs are enforced at schema level so that duplicate entity creation is prevented.
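The two-stage resolution described above might look something like the following sketch. It uses Python's standard-library `difflib.SequenceMatcher` as a stand-in for whatever similarity scoring a production pipeline would use. The canonical registry, the identifier values, the noise-token list, and the 0.85 threshold are all assumptions made for illustration.

```python
import re
from difflib import SequenceMatcher

# Hypothetical canonical registry; identifiers are placeholders, not real codes.
CANONICAL = {
    "CLIENT-001": {"name": "ABC Holdings Ltd", "legal_entity_id": "HYPOTHETICAL-LEI-001"},
    "CLIENT-002": {"name": "Northwind Advisory Limited", "legal_entity_id": "HYPOTHETICAL-LEI-002"},
}

# Assumed list of tokens that carry little identifying value in company names.
NOISE_TOKENS = {"ltd", "limited", "plc", "inc", "group", "holdings"}


def normalise(name):
    """Lowercase, strip punctuation, and drop noise tokens before comparison."""
    tokens = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    return " ".join(t for t in tokens if t not in NOISE_TOKENS)


def resolve(record, threshold=0.85):
    """Return (canonical_id, method); method records how the match was made."""
    # Stage 1: deterministic match on a legal entity identifier.
    lei = record.get("legal_entity_id")
    if lei:
        for canonical_id, entity in CANONICAL.items():
            if entity["legal_entity_id"] == lei:
                return canonical_id, "deterministic"
    # Stage 2: probabilistic match on normalised names.
    candidate = normalise(record.get("name", ""))
    if not candidate:
        return None, "unresolved"
    best_id, best_score = None, 0.0
    for canonical_id, entity in CANONICAL.items():
        score = SequenceMatcher(None, candidate, normalise(entity["name"])).ratio()
        if score > best_score:
            best_id, best_score = canonical_id, score
    if best_score >= threshold:
        return best_id, "probabilistic"
    return None, "unresolved"


print(resolve({"name": "ABC Group"}))
print(resolve({"name": "Legacy 7781", "legal_entity_id": "HYPOTHETICAL-LEI-001"}))
print(resolve({"name": "XYZ Partners"}))
```

Returning the match method alongside the identifier lets a downstream step route probabilistic matches to rule-based validation or human review before the canonical ID is committed, while deterministic matches can pass straight through.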

Controlled vocabularies and taxonomy governance ensure that entity classifications remain consistent. New entity types or attributes must conform to the ontology schema before being admitted into the graph.

Once entities are aligned, relationship modelling defines how they connect. These relationships are not inferred dynamically from text; they are explicitly defined in the ontology and validated against permitted relationship types.

For example:

Relationship constraints are enforced through schema definitions and validation mechanisms (such as SHACL constraints, schema validation rules, or business-rule engines). This prevents structurally invalid connections — for example, linking a Risk Category directly to a Jurisdiction without an intermediate regulatory mapping if the ontology prohibits that path.
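To make the idea concrete, here is a small plain-Python check in the spirit of a domain and range constraint. It is not SHACL and does not use any SHACL library; the ontology table, relationship names, and node types are hypothetical, and a real deployment would express the same constraints in its own validation layer.

```python
# Hypothetical ontology: relationship type -> (permitted source type, permitted target type).
ONTOLOGY = {
    "governs": ("Regulation", "Policy"),
    "applies_to": ("Policy", "ContractClause"),
    "impacts": ("ContractClause", "RiskCategory"),
    "applies_in": ("Regulation", "Jurisdiction"),
}

NODE_TYPES = {
    "Regulation A": "Regulation",
    "Policy B": "Policy",
    "Clause C": "ContractClause",
    "Risk Category Alpha": "RiskCategory",
    "UK": "Jurisdiction",
}


def validate(triples, node_types=NODE_TYPES, ontology=ONTOLOGY):
    """Return a list of human-readable violations; an empty list means the triples conform."""
    violations = []
    for subject, relation, obj in triples:
        if relation not in ontology:
            violations.append(f"{subject} -[{relation}]-> {obj}: unknown relationship type")
            continue
        expected = ontology[relation]
        actual = (node_types.get(subject), node_types.get(obj))
        if actual != expected:
            violations.append(
                f"{subject} -[{relation}]-> {obj}: expected {expected[0]} -> {expected[1]}, "
                f"got {actual[0]} -> {actual[1]}"
            )
    return violations


candidate_triples = [
    ("Regulation A", "governs", "Policy B"),
    ("Regulation A", "applies_in", "UK"),
    # Structurally invalid: a risk category linked straight to a jurisdiction.
    ("Risk Category Alpha", "applies_in", "UK"),
]

for violation in validate(candidate_triples):
    print(violation)
```

Running the check before ingestion means the invalid Risk Category to Jurisdiction link is reported and can be rejected, rather than silently becoming a traversable path.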

Inference, where used, is rule-driven rather than generative. For example, if Regulation A governs Policy B, and Policy B applies to Clause C, a rule engine may derive a governed impact between Regulation A and Clause C — but only if explicitly permitted by defined logic.

This ensures that reasoning pathways are deterministic and policy-aligned.
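The Regulation A, Policy B, Clause C example can be sketched as a single explicit rule. The rule format, the derived relationship name `has_governed_impact_on`, and the `infer` helper are hypothetical; the point is only that nothing is derived unless a named rule permits it.

```python
# Hypothetical rule: if X governs Y and Y applies_to Z, derive X has_governed_impact_on Z.
RULES = [
    {
        "name": "regulation_impacts_clause",
        "first": "governs",
        "second": "applies_to",
        "derives": "has_governed_impact_on",
    },
]


def infer(triples, rules=RULES):
    """Apply each two-step rule once and return derived triples with their justification."""
    derived = []
    for rule in rules:
        for a, first_relation, b in triples:
            if first_relation != rule["first"]:
                continue
            for b2, second_relation, c in triples:
                if b2 == b and second_relation == rule["second"]:
                    derived.append(
                        ((a, rule["derives"], c), {"rule": rule["name"], "via": b})
                    )
    return derived


facts = [
    ("Regulation A", "governs", "Policy B"),
    ("Policy B", "applies_to", "Clause C"),
    # No rule mentions 'references', so nothing is derived from this fact.
    ("Policy B", "references", "Clause D"),
]

for triple, justification in infer(facts):
    print(triple, justification)
```

Each derived triple carries the name of the rule and the intermediate node that produced it, so an inferred link can be audited in the same way as a stored one. With an empty rule list, `infer` returns nothing at all.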

Lineage is not added retrospectively. It is embedded at node and edge level. Each entity and relationship can store:

Provenance metadata allows organisations to trace not only which documents informed an entity, but also which transformation rules were applied during ingestion.

Access controls and role-based governance layers ensure that sensitive relationships (e.g. client exposure mappings) are visible only to authorised users. Schema evolution is managed through controlled change processes to prevent semantic drift.
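One simple way to picture role-based visibility is to tag sensitive relationships with the role required to see them and filter before any traversal runs. The `required_role` field, the role names, and the sample relationships below are assumptions for illustration; production systems typically enforce this in the database or an access-control layer rather than in application code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Relationship:
    source: str
    relation: str
    target: str
    # Hypothetical field: None means the relationship is visible to every user.
    required_role: Optional[str] = None


RELATIONSHIPS = [
    Relationship("Regulation A", "governs", "Policy B"),
    Relationship("ABC Holdings Ltd", "has_exposure", "Risk Category Alpha",
                 required_role="risk_analyst"),
]


def visible_to(relationships, user_roles):
    """Keep only the relationships the given set of roles is allowed to see."""
    return [
        r for r in relationships
        if r.required_role is None or r.required_role in user_roles
    ]


print(len(visible_to(RELATIONSHIPS, {"consultant"})))
print(len(visible_to(RELATIONSHIPS, {"risk_analyst"})))
```

Filtering before traversal, rather than after, means a restricted edge cannot contribute to an answer even indirectly.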

When generative layers such as KIAA operate on top of this foundation, they are not querying a loose collection of linked text. They are interacting with a governed semantic system where entities are validated, relationships are schema-constrained, and lineage is preserved.

The generative component synthesises structured traversal results into narrative form, but the integrity of the response depends on the disciplined engineering of the structural layer beneath it.

KIAA, Merit’s Know It All Agent, exemplifies how generative AI and knowledge graphs can be combined to deliver enterprise intelligence that is both usable and reliable. KIAA does not operate in isolation. It queries structured, ontology-governed knowledge representations, traverses validated relationships, and synthesises responses that can be traced back to their semantic origins.

Unlike generative systems that rely solely on language patterns, KIAA operates within schema-defined constraints.

Relationship traversals follow permitted ontology paths, business rules govern inference logic, and provenance metadata is preserved at entity and relationship level. The generative layer does not invent structural links; it articulates results derived from validated graph queries.

This means responses include not only narrative explanation, but context, constraint awareness, and justification traces such as which regulatory node was traversed, which policy mapping rule applied, and which source documents underpin the connection. In professional settings where decisions must be defended internally and externally, this level of structural transparency is critical.

KIAA also supports continuous refinement of the underlying knowledge graph through a combination of governed processes:

This hybrid model ensures the graph evolves with the organisation while remaining schema-governed and auditable. The intelligence layer is therefore not static. It adapts to new regulations, client structures, contractual forms, and policy updates - without compromising structural integrity.

Through this combination of governed semantics and controlled refinement, KIAA enables generative interaction that remains aligned with enterprise logic rather than drifting into pattern-based approximation.

Generative AI has expanded what organisations think is possible. But without structure and governance, it is not sufficient for enterprise decision-making. Knowledge graphs provide the semantic, relational and lineage foundation that generative models need to deliver intelligence you can trust.

In environments where interpretation, traceability and cross-domain consistency are critical, structured semantic layers such as knowledge graphs provide a more robust foundation than text-only systems.

When generative AI is built on top of a governed, semantically rich knowledge layer, as enabled by solutions such as Merit’s KIAA, organisations can achieve both fluency and dependability in their enterprise intelligence.

At Merit, generative AI is not deployed as a standalone conversational interface layered over document repositories. It is implemented as part of a structured intelligence architecture where generative components operate over a governed semantic layer.

KIAA, our Know It All Agent, is built on an ontology-driven knowledge graph supported by graph-backed retrieval. Instead of retrieving documents based solely on keyword similarity, KIAA executes structured graph queries against canonical entities and validated relationships.

During ingestion, documents, contracts, policies, and client artefacts are processed through semantic extraction pipelines. Entities are mapped to predefined ontology classes, reconciled through entity resolution workflows, and validated against schema constraints. Relationships are permitted only where defined by the ontology and enforced through rule-based validation mechanisms.

When a user queries regulatory obligations, contractual dependencies, or risk exposures, KIAA performs graph traversal within these governed constraints. The generative layer synthesises the traversal results into narrative form, but the relationships themselves are derived from schema-defined, rule-enforced connections.

Lineage is embedded at entity and edge level. Each node and relationship can store provenance metadata including source references, version identifiers, extraction timestamps, and transformation logs. Query execution paths are recorded, enabling audit trail logging that documents how a specific conclusion was constructed.

Governance controls are implemented through:

Where inference is required, ontology-driven reasoning and rule engines operate over defined relationship types rather than allowing unconstrained generative interpretation. For professional services firms, this architecture supports defensible outputs. The generative layer provides accessibility and usability; the graph-backed semantic layer provides determinism, validation, and traceability.

The result is not conversational AI applied to documents, but a governed intelligence system capable of supporting compliance reviews, advisory workflows, and regulated decision-making environments.